How does a search engine work?

A search engine exists to show the most relevant results for what people search for on the internet, so first it needs to discover the internet's content.

For that, the content must first be visible to the search engine; the search engine then has to understand the content and organize it.

That is why SEO is important: SEO helps the search engine identify and process your content so it can show it in search results.

A search engine has three main functions:

- Crawling

- Indexing

- Ranking

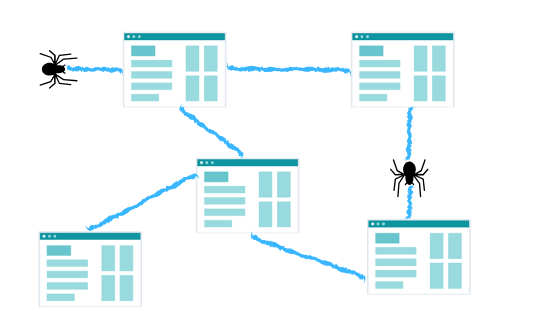

So what is Google crawling?

Crawling is the process of discovery: the search engine sends out bots (Google's is called Googlebot), also known as crawlers or spiders, to find new and updated content.

Crawlers discover new content by following links. The sitemap we create lists all the pages of our blog, so it is important to create one: it helps the search engine find our web pages and crawl the site more deeply.
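To make the link-following idea concrete, here is a minimal Python sketch of a breadth-first crawler. It is an illustration, not how Googlebot works: the site is a hypothetical in-memory dictionary (`PAGES`, with made-up `example.com` URLs) instead of real HTTP requests, and `LinkExtractor` and `crawl` are names chosen for this example. It uses only the standard library.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin

# Hypothetical in-memory "website": URL -> HTML. A real crawler would fetch these over HTTP.
PAGES = {
    "https://example.com/": '<a href="/blog">Blog</a> <a href="/about">About</a>',
    "https://example.com/blog": '<a href="/blog/seo-basics">SEO basics</a>',
    "https://example.com/blog/seo-basics": '<a href="/">Home</a>',
    "https://example.com/about": "<p>No links here.</p>",
}


class LinkExtractor(HTMLParser):
    """Collects the href of every <a> tag on a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(start_url):
    """Breadth-first crawl: visit a page, then queue every link not seen before."""
    seen = {start_url}
    queue = deque([start_url])
    visited = []
    while queue:
        url = queue.popleft()
        html = PAGES.get(url)
        if html is None:  # unknown page; a real crawler would get a 404 here
            continue
        visited.append(url)
        parser = LinkExtractor()
        parser.feed(html)
        for href in parser.links:
            absolute = urljoin(url, href)  # resolve relative links such as "/blog"
            if absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return visited


print(crawl("https://example.com/"))
```

Starting from the home page, the crawler reaches `/blog/seo-basics` only through the link on `/blog`. A page that nothing links to would never be discovered this way, which is the gap a sitemap fills.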

What is Google indexing?

In simple terms, it is the process where the discovered content or web pages are stored in the Google index.

After your site has been crawled, the next step is indexing the site or its web pages. While indexing, the search engine analyzes each page's content.

All of the information it gathers is stored in its index.
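A common way to store what was found is an inverted index, which maps each word to the pages that contain it. Google's actual index is far richer and its details are not public; the sketch below, with hypothetical pages and helper names (`tokenize`, `build_index`), only shows the basic data structure.

```python
import re
from collections import defaultdict

# Hypothetical crawled pages: URL -> page text.
pages = {
    "/seo-basics": "SEO helps search engines understand your content",
    "/crawling": "Search engines discover content by crawling links",
    "/recipes": "A quick pasta recipe",
}


def tokenize(text):
    """Lowercase the text and split it into words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(pages):
    """Inverted index: each word maps to the set of URLs whose text contains it."""
    index = defaultdict(set)
    for url, text in pages.items():
        for word in tokenize(text):
            index[word].add(url)
    return index


index = build_index(pages)
print(sorted(index["content"]))
```

Looking up a word is now a single dictionary access instead of a scan over every page, which is why search engines store content this way before any query arrives.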

To achieve a higher rank in the search engine, it is important that only the essential parts of your website get indexed.

Search engine ranking:

When a user types something into the search bar, the search engine looks in its index for highly relevant content and presents that content as search results.

Ordering these search results by relevance is called ranking.
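As a toy illustration of ranking, the sketch below scores each page by how many times the query's words appear in it and sorts from highest to lowest. This term-count score is an assumption chosen for simplicity; real search engines combine many more signals (links, freshness, engagement and so on), and the pages here are hypothetical.

```python
import re

# Hypothetical indexed pages: URL -> page text.
pages = {
    "/seo-basics": "SEO basics: how SEO helps search engines rank content",
    "/crawling": "How search engines crawl and index content",
    "/recipes": "A quick pasta recipe",
}


def tokenize(text):
    """Lowercase the text and split it into words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def rank(query, pages):
    """Return matching URLs ordered by how often the query words occur, highest first."""
    terms = tokenize(query)
    scores = {}
    for url, text in pages.items():
        words = tokenize(text)
        score = sum(words.count(term) for term in terms)
        if score > 0:  # pages that contain none of the query words are left out
            scores[url] = score
    # Sort by score descending; ties are broken alphabetically by URL.
    return sorted(scores, key=lambda url: (-scores[url], url))


print(rank("seo search", pages))
```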

Main factors in Google crawling and indexing:

There are millions of websites containing many more web pages, and many site owners are unhappy with how quickly their pages get crawled and indexed.

The following factors play a significant role in Google crawling and indexing.

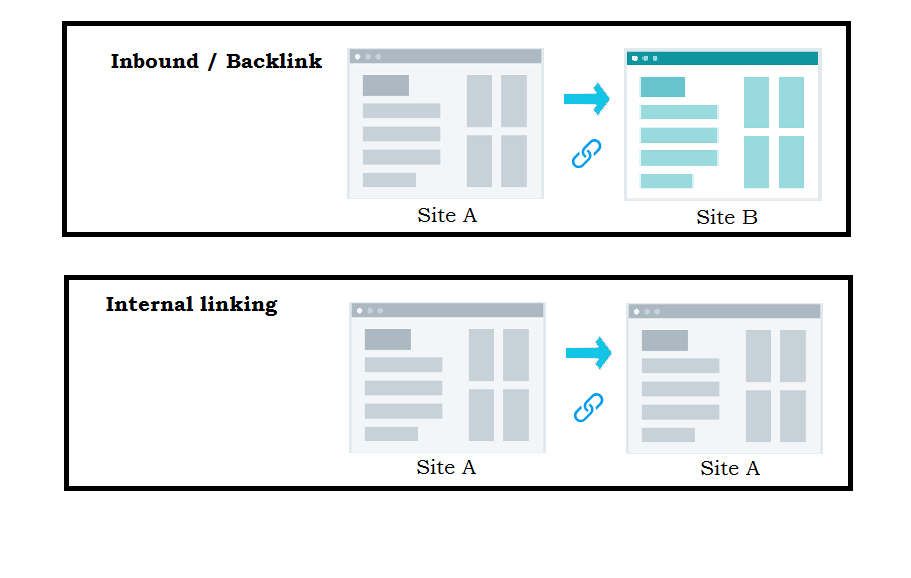

Backlinks:

The more backlinks you have, the more reliable and well known the search engine assumes your site to be.

Even if your content is good and ranks well in the search results, if the site is not earning any backlinks, the search engine may assume that your site has low-quality content.

Internal linking:

There are numerous debates about internal linking, but the important point is that internal linking is good practice for keeping users on your site.

Some people also recommend using the same anchor text for internal links within an article, on the view that it helps Google crawl deeper into a website.
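To audit which links on a page are internal and what anchor text they use, a short script can parse the HTML and compare each link's host with the page's host. This is a hypothetical helper (`AnchorCollector`, `split_links`) built on Python's standard `html.parser` and `urllib.parse`; the sample page and URLs are made up.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class AnchorCollector(HTMLParser):
    """Collects (href, anchor text) pairs for every <a href="..."> tag."""

    def __init__(self):
        super().__init__()
        self.anchors = []
        self._href = None  # href of the <a> tag we are currently inside
        self._text = []    # text fragments seen inside that tag

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.anchors.append((self._href, "".join(self._text).strip()))
            self._href = None


def split_links(page_url, html):
    """Split a page's links into internal (same host) and external ones."""
    collector = AnchorCollector()
    collector.feed(html)
    collector.close()
    host = urlparse(page_url).netloc
    internal, external = [], []
    for href, text in collector.anchors:
        absolute = urljoin(page_url, href)  # "/crawl-budget" -> full URL
        bucket = internal if urlparse(absolute).netloc == host else external
        bucket.append((absolute, text))
    return internal, external


html = """
<p>Read our <a href="/crawl-budget">crawl budget guide</a> and the
<a href="https://example.com/sitemaps">sitemap guide</a>, or see
<a href="https://developers.google.com/search">Google's docs</a>.</p>
"""
internal, external = split_links("https://example.com/blog/seo", html)
print("internal:", internal)
print("external:", external)
```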

Domain Name:

It is important to have a domain name that matches your website, because the domain name's importance has been rising significantly.

Using a domain that contains your main keywords is most important.

Duplicate content:

Never publish duplicate content on your website, and never repeat the same paragraphs across your site's content.

Google discourages these kinds of practices.
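One simple way to catch repeated paragraphs before publishing is to normalize each paragraph (lowercase, strip punctuation and extra spaces) and compare hashes. This sketch assumes paragraphs are separated by blank lines, uses hypothetical page text, and only catches near-verbatim copies; it is not how Google detects duplicates, which is not public.

```python
import hashlib
import re
from collections import defaultdict


def normalize(paragraph):
    """Lowercase and drop punctuation/extra whitespace so trivial edits don't hide a copy."""
    return " ".join(re.findall(r"[a-z0-9]+", paragraph.lower()))


def find_duplicate_paragraphs(pages):
    """Return groups of (url, paragraph number) that share the same normalized text."""
    seen = defaultdict(list)
    for url, text in pages.items():
        paragraphs = [p for p in text.split("\n\n") if p.strip()]
        for i, paragraph in enumerate(paragraphs):
            digest = hashlib.sha256(normalize(paragraph).encode()).hexdigest()
            seen[digest].append((url, i))
    return [places for places in seen.values() if len(places) > 1]


# Hypothetical site content: URL -> text with blank-line-separated paragraphs.
pages = {
    "/page-a": "Crawling finds new pages.\n\nIndexing stores them.",
    "/page-b": "Ranking orders results.\n\ncrawling finds NEW pages!",
}
print(find_duplicate_paragraphs(pages))
```

The second paragraph of `/page-b` is flagged even though its case and punctuation differ, because both versions normalize to the same text.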

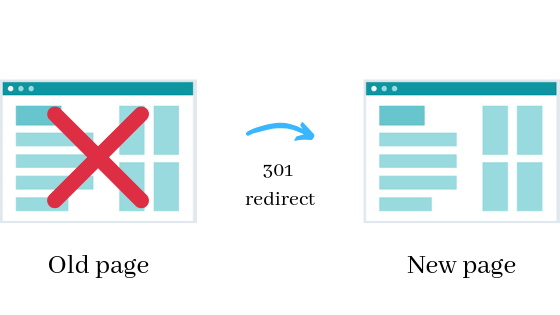

301 redirects and 404 errors:

A 301 (permanent) redirect tells both visitors and search engines that a page has permanently moved to a new URL.

When you use a 301 redirect, it is important to point it at a URL with similar content.

For example:

If a web page ranks well for a search query and you 301 redirect it to a URL with different content, its ranking is likely to drop, because the content that made it relevant to that query is no longer there.

A 301 redirect transfers link equity from the old page to the new URL.

Users who follow the old link also land on the relevant page at the new location, instead of an error page at the old one.

It also helps Google find the page at its new location for indexing.

So avoid redirecting your URLs to irrelevant pages, and use a 301 only when the page has genuinely moved to a new location. Use it responsibly.

For a temporary move, you can use a 302 redirect instead.
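The difference between a permanent and a temporary redirect comes down to the HTTP status code the server returns along with a `Location` header. The sketch below models that decision as a plain function over a hypothetical redirect table (`REDIRECTS`, `LIVE_PAGES` and `resolve` are illustrative names); on a real site this is usually configured in the web server or CMS rather than written by hand.

```python
from http import HTTPStatus

# Hypothetical redirect table: old path -> (new path, is the move permanent?)
REDIRECTS = {
    "/old-seo-guide": ("/seo-guide", True),        # moved for good -> 301
    "/summer-sale": ("/sale-coming-soon", False),  # temporary move -> 302
}
LIVE_PAGES = {"/", "/seo-guide", "/sale-coming-soon"}


def resolve(path):
    """Return (status code, Location header or None) for a requested path."""
    if path in LIVE_PAGES:
        return HTTPStatus.OK, None
    if path in REDIRECTS:
        target, permanent = REDIRECTS[path]
        status = HTTPStatus.MOVED_PERMANENTLY if permanent else HTTPStatus.FOUND
        return status, target
    return HTTPStatus.NOT_FOUND, None


for path in ["/old-seo-guide", "/summer-sale", "/missing"]:
    status, location = resolve(path)
    print(path, int(status), location)
```

Each old path points at a page with matching content, which is the point made above about redirecting only to relevant URLs.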

404 – not found:

404 – not found is an error that occurs when a page has been deleted, the URL is missing, or a link is broken.

The requested URL may also contain a typo. When a visitor arrives at a 404 – not found page, they often get annoyed and leave.

When the search engine gets a 404 – not found response, it means it cannot access the page at that URL.
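Broken internal links are a common source of 404s, and they can be found by checking every link target against the pages that actually exist. In this hypothetical sketch, `SITE` stands in for a crawl of your own site and "not in `SITE`" stands in for a 404 response; a real checker would request each URL and look at the returned status code.

```python
from urllib.parse import urljoin

# Hypothetical crawl result: page URL -> links found on that page.
SITE = {
    "https://example.com/": ["/blog", "/contact"],
    "https://example.com/blog": ["/blog/seo-basics", "/blog/old-post"],
    "https://example.com/blog/seo-basics": ["/"],
}


def find_broken_links(site):
    """List (page, target) pairs whose target is not a known page, i.e. would return 404."""
    broken = []
    for page, links in site.items():
        for href in links:
            target = urljoin(page, href)  # turn "/contact" into a full URL
            if target not in site:
                broken.append((page, target))
    return broken


for page, target in find_broken_links(SITE):
    print(f"{page} links to missing page {target}")
```

Each broken link found this way can either be fixed at the source or, if the content moved, covered by a 301 redirect.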

XML Sitemap:

As soon as you create a website, one of the first recommended steps is to add an XML sitemap.

It informs Google when your website is updated so that Google can crawl it.

The sitemap gives the Google crawler a list of the URLs on your site, which it uses to discover and index your content.

If your site is not linked from any other site, you can still get it indexed by submitting your XML sitemap in Google Search Console.

Make sure you include only URLs that you want search engines to index, and don't include URLs in the sitemap that you have blocked in robots.txt.
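A sitemap is a small XML file following the sitemaps.org protocol: a `<urlset>` element containing one `<url>` per page, each with a `<loc>` and optionally a `<lastmod>` date. The sketch below builds one with Python's `xml.etree.ElementTree` and uses `urllib.robotparser` to leave out URLs blocked by robots.txt, as recommended above. The page list, dates and robots rules are hypothetical.

```python
import xml.etree.ElementTree as ET
from urllib.robotparser import RobotFileParser

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages, robots_lines, user_agent="Googlebot"):
    """Build a sitemap <urlset> string, skipping URLs that robots.txt blocks."""
    robots = RobotFileParser()
    robots.parse(robots_lines)
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        if not robots.can_fetch(user_agent, loc):
            continue  # blocked in robots.txt, so keep it out of the sitemap
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="unicode")


pages = [  # hypothetical (URL, last modified date) pairs
    ("https://example.com/", "2024-01-15"),
    ("https://example.com/blog/seo-basics", "2024-01-10"),
    ("https://example.com/admin/settings", "2024-01-01"),
]
robots_txt = ["User-agent: *", "Disallow: /admin/"]
print(build_sitemap(pages, robots_txt))
```

The `/admin/settings` page is dropped because robots.txt blocks it. The resulting file would typically be saved as `sitemap.xml` at the site root and then submitted in Google Search Console.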

Robots.txt:

A robots.txt file tells crawlers which parts of the website they should or shouldn't crawl. It is located in the root directory of the website, for example https://example.com/robots.txt.

Googlebot checks the robots.txt file first, so it knows which parts it should crawl and which it shouldn't.

If Googlebot gets an error when accessing the site's robots.txt file and can't determine what is allowed, it doesn't crawl the site.

If Googlebot can't find a robots.txt file for the site, it goes ahead and crawls the site.

If Googlebot finds a robots.txt file, it reads the instructions inside it and then crawls the site accordingly.
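Python's standard library ships a robots.txt parser, `urllib.robotparser.RobotFileParser`, which is handy for checking what a given crawler may fetch. The robots.txt content below is hypothetical. Note that this parser applies the first matching rule in a group, whereas Google documents most-specific (longest path) precedence, so results can differ for files that mix overlapping `Allow` and `Disallow` lines.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt served at https://example.com/robots.txt
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /search/

User-agent: *
Disallow: /admin/
Disallow: /cart
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())  # parse() takes the file's lines

checks = [
    ("Googlebot", "https://example.com/blog/seo-basics"),
    ("Googlebot", "https://example.com/search/?q=seo"),
    ("Googlebot", "https://example.com/admin/login"),
    ("SomeOtherBot", "https://example.com/admin/login"),
    ("SomeOtherBot", "https://example.com/search/?q=seo"),
]
for agent, url in checks:
    print(agent, url, parser.can_fetch(agent, url))
```

Googlebot matches its own `User-agent: Googlebot` group and follows only that group's rules, so the `/admin/` rule under `*` does not apply to it, while any other crawler falls back to the `*` group.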

Crawl budget:

Crawl budget is the number of URLs Googlebot crawls on your website within a given time. It is important to optimize it so that Googlebot doesn't waste time crawling pages that are not important.

Don't block pages in robots.txt if you rely on noindex or canonical tags on them. When Googlebot is blocked from a page, it cannot read the page and will never see the instructions on it.

Managing your crawl budget means using robots.txt to keep the crawler on the pages that matter. Otherwise, the crawler may keep crawling unimportant pages, miss your important ones, and leave the site.

Crawl budget matters most for very large sites with thousands of URLs.
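Google does not publish a crawl budget number for a site, but the idea can be sketched as a crawler that may fetch only a fixed number of URLs, skips anything robots.txt blocks, and spends the rest on the most important pages first. The `budget` value, the priority scores and the `plan_crawl` helper are hypothetical; how Google actually prioritizes URLs is not public.

```python
import heapq
from urllib.robotparser import RobotFileParser


def plan_crawl(candidates, robots_lines, budget, user_agent="Googlebot"):
    """Pick at most `budget` URLs: drop blocked ones, then take the highest priority first."""
    robots = RobotFileParser()
    robots.parse(robots_lines)
    allowed = [
        (priority, url)
        for url, priority in candidates
        if robots.can_fetch(user_agent, url)
    ]
    # nlargest returns the `budget` highest-priority entries in descending order
    return [url for priority, url in heapq.nlargest(budget, allowed)]


candidates = [  # hypothetical (URL, priority) pairs, e.g. from a sitemap and internal links
    ("https://example.com/", 1.0),
    ("https://example.com/blog/seo-basics", 0.8),
    ("https://example.com/tag/misc?page=37", 0.1),
    ("https://example.com/cart?item=42", 0.2),
    ("https://example.com/blog/crawl-budget", 0.7),
]
robots_txt = ["User-agent: *", "Disallow: /cart"]
print(plan_crawl(candidates, robots_txt, budget=3))
```

With a budget of three, the low-value tag page is never reached and the blocked cart URL is skipped entirely, so the whole budget goes to the pages that matter.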

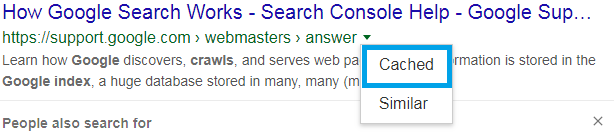

Googlebot crawler:

Googlebot crawls web pages at different intervals.

Well-known sites that post content frequently are crawled more often than sites that don't.

A cached copy of a page is a snapshot from the last time Googlebot crawled it.

Google Ranking:

Google uses its core algorithms to bring relevant results for the user's search query.

It updates these algorithms regularly to improve the quality of the search results, and it sometimes makes significant changes to them for the same reason.

As the algorithms have kept improving, SEO has changed over the past years as well.

Over the years, the search engine became more knowledgeable and learned the language of the people who search on it.

Before that, SEO was simple and mostly keyword stuffing: people used keywords as many times as possible, made some font changes, and hoped the post would rank.

The content people published this way was terrible for the user experience; it was hard to make sense of it.

But search engines wanted a great user experience and quality search results, and that is where modern SEO came into play.

Links in SEO:

Inbound links, or backlinks, are the links your site gets from other websites.

Internal links are another type: links between pages on the same site.

Links have historically played a major part in SEO.

From the early days, search engines have known that links from high-authority, trustworthy sites help them figure out how to rank search results.

Backlinks are considered the web's version of word-of-mouth publicity.


Let’s consider an example:

If your website provides valuable content that makes sense and helps people, they will want to come back to your site again and again.

They will also recommend your site to other people.

So referrals from other people = a good sign of a page's authority.

If you pay people to come to your site:

Irrelevant people coming to your site = can be flagged as spam.

If you yourself claim that your site is the best:

Referrals from yourself = not a good sign.

Let people talk about it: if your content is good, people will come.

That's the reason PageRank was created. PageRank is part of Google's core algorithm; it estimates the importance of a web page by evaluating both the quality and the quantity of the links pointing to it.
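The original PageRank idea can be written as an iterative calculation: every page starts with an equal share of rank, then repeatedly passes its rank to the pages it links to, with a damping factor (commonly 0.85) modelling a visitor who sometimes jumps to a random page instead of following a link. In formula form, PR(p) = (1 - d) / N + d × Σ PR(q) / L(q), summed over the pages q linking to p, where L(q) is the number of links on q. This is the textbook formulation shown on a hypothetical four-page graph; Google's current ranking uses many more signals and its production details are not public.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Power-iteration PageRank for a dict: page -> list of pages it links to."""
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}  # start with equal rank everywhere
    for _ in range(iterations):
        # every page gets the "random jump" share first
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, outlinks in links.items():
            if outlinks:
                share = damping * rank[page] / len(outlinks)
                for target in outlinks:
                    new_rank[target] += share
            else:
                # dangling page with no outlinks: spread its rank evenly over all pages
                for target in pages:
                    new_rank[target] += damping * rank[page] / n
        rank = new_rank
    return rank


# Hypothetical link graph: A links to B and C, B and D link to C, C links back to A.
links = {
    "A": ["B", "C"],
    "B": ["C"],
    "C": ["A"],
    "D": ["C"],
}
ranks = pagerank(links)
for page, score in sorted(ranks.items(), key=lambda kv: -kv[1]):
    print(page, round(score, 3))
```

Page `C` ends up with the highest score because three pages link to it, while `D`, which nothing links to, keeps only the minimum share of (1 - 0.85) / 4.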

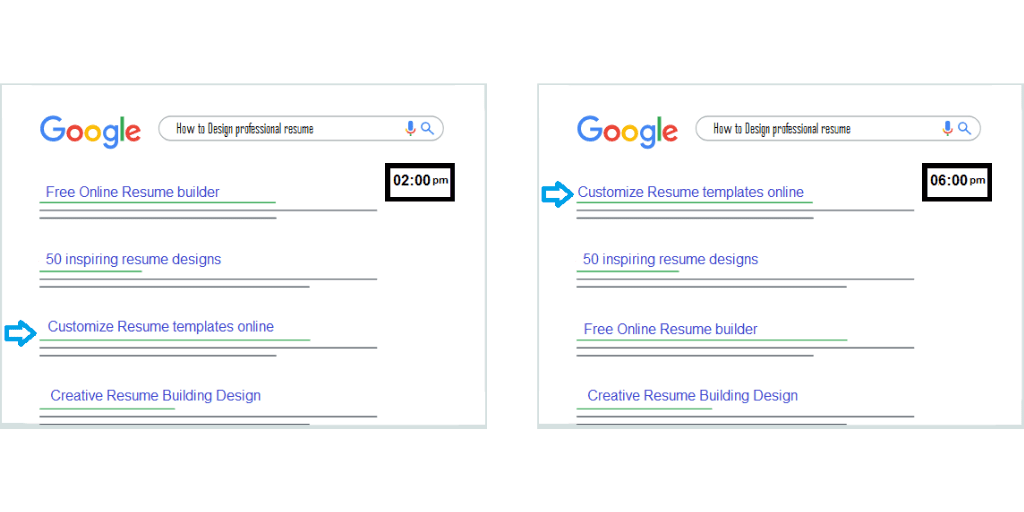

RankBrain:

RankBrain is a machine learning component of Google's search algorithm. Its main job is to process searches and deliver more relevant results for the user's query.

When RankBrain encounters a word or phrase it is not familiar with, it guesses which words or phrases might have a similar meaning and presents search results accordingly.

Because it is based on machine learning, it keeps improving as it learns from new data and observations.

RankBrain is the most important component of Google's ranking algorithm after links and content.

RankBrain has helped Google speed up the testing of its algorithm across keyword categories, so that it can choose the best content for the user's search query.

If, for a given query, a lower-ranking URL offers a better result than a higher-ranking one, RankBrain can adjust the results by moving the lower-ranking URL above the higher-ranking one.

As a result, the user gets more relevant content.

Engagement metrics:

Engagement metrics show how users interact with your site after arriving from a search result.

- Time on page – the amount of time the user spends on the page.

- Bounce rate – the percentage of visitors who enter the site and leave without viewing any other page on the same site.

- Clicks – the number of users who visit your site from the search results.

- Pogo-sticking – when a user clicks a search result, the page opens, and the user then clicks back to the search results.
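The metrics in the list above can be computed from visit logs. Google does not disclose exactly whether or how it uses them, so this is only an analytics-style sketch: the `Visit` record, its fields, the sample visits and the 10-second pogo-sticking threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Visit:
    """One hypothetical visit that began with a click on a search result."""
    pages_viewed: int               # pages seen on your site during the visit
    seconds_on_landing_page: float  # time spent on the page opened from the results
    returned_to_results: bool       # user clicked back to the search results


def engagement_summary(visits, pogo_threshold_seconds=10.0):
    """Compute clicks, average time on page, bounce rate and pogo-sticking rate."""
    if not visits:
        raise ValueError("need at least one visit")
    clicks = len(visits)
    bounces = sum(1 for v in visits if v.pages_viewed == 1)
    # pogo-sticking: went back to the results quickly (the threshold is an assumption)
    pogo = sum(
        1
        for v in visits
        if v.returned_to_results and v.seconds_on_landing_page < pogo_threshold_seconds
    )
    return {
        "clicks": clicks,
        "avg_time_on_page": sum(v.seconds_on_landing_page for v in visits) / clicks,
        "bounce_rate": bounces / clicks,
        "pogo_sticking_rate": pogo / clicks,
    }


visits = [
    Visit(pages_viewed=1, seconds_on_landing_page=4, returned_to_results=True),
    Visit(pages_viewed=3, seconds_on_landing_page=180, returned_to_results=False),
    Visit(pages_viewed=1, seconds_on_landing_page=95, returned_to_results=False),
    Visit(pages_viewed=2, seconds_on_landing_page=60, returned_to_results=True),
]
print(engagement_summary(visits))
```

Here half of the visits are bounces (the first and third), but only the first counts as pogo-sticking, since the fourth visitor stayed a full minute before going back.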

The overall goal is for Google to deliver the highest-quality, most relevant results.